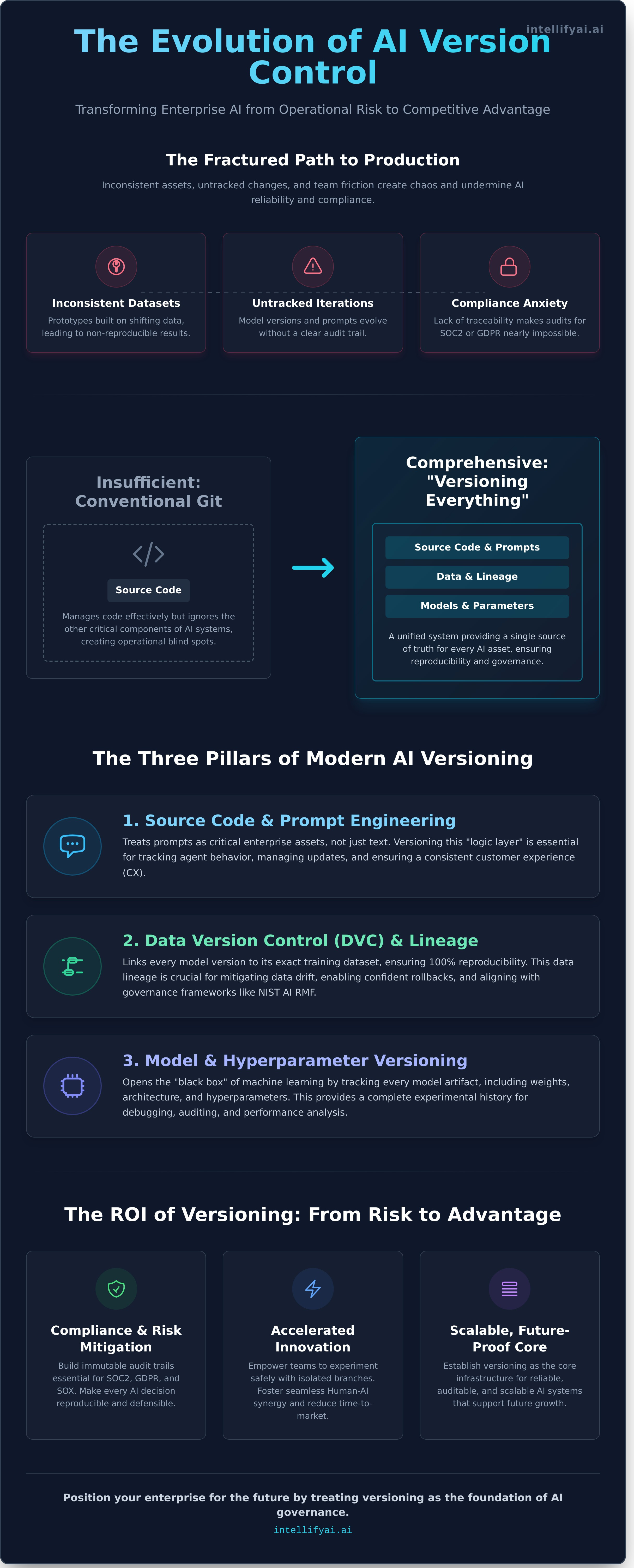

Your most innovative AI models are also your greatest operational risks. The path from prototype to production is fractured by inconsistent datasets, untracked model iterations, and persistent friction between data science and engineering teams. This operational chaos creates significant compliance anxiety and undermines the very reliability of your AI initiatives. The solution is not another complex platform, but a fundamental discipline reimagined for the AI era: enterprise version control.

By 2026, the distinction between market leaders and laggards will be defined by their ability to govern AI at scale. In this article, we deconstruct how modern version control transcends its software development roots to become critical infrastructure for enterprise AI. Discover the strategic framework for establishing a single source of truth for all your AI assets, from data and models to feature pipelines. We will show how to build the tamper-evident audit trails that support SOC2 and GDPR compliance, and how to create the seamless Human-AI synergy required to accelerate innovation securely. Prepare to transform your AI governance from a source of risk into your greatest competitive advantage.

Key Takeaways

- Learn why conventional Git workflows are insufficient for MLOps and Agentic AI, and discover the three pillars required for comprehensive AI asset management.

- Reframe your approach to enterprise AI governance by understanding how robust version control directly supports critical compliance frameworks like SOC2, GDPR, and SOX.

- Implement a "Versioning First" culture by standardizing commit messages to reflect business impact, not just technical updates, aligning development with strategic goals.

- Position your enterprise for future growth by treating versioning as the core infrastructure for scalable, reliable, and auditable AI systems.

Understanding Version Control in the Era of Intelligent Automation

In the modern enterprise, operational excellence is built on a foundation of control and traceability. The era of intelligent automation demands more than manual tracking; it requires the systematic management of every change to critical assets, from source code and infrastructure configurations to AI models and datasets. This discipline has evolved from simple file backups into sophisticated, multi-dimensional systems that form the bedrock of high-velocity development and deployment.


Achieving transformative results with AI is impossible without this level of control. Every iteration of a model, every adjustment to a workflow, and every new data source must be tracked with precision. This systematic management of changes, known as version control, provides a tamper-evident audit trail essential for enterprise accountability. It is a core prerequisite for building stable, scalable, and compliant AI systems, ensuring that every automated process is both reproducible and defensible.

The Core Mechanism: Tracking Change and Intent

At its heart, versioning captures the "who, what, and why" behind every strategic pivot. Key actions like commits, branches, and merges provide a clear business narrative. A commit is a formal snapshot of progress, recorded with its author, a timestamp, and a message explaining its purpose. A branch allows teams to explore new strategies, such as testing a new algorithm, in isolation. A successful experiment is then integrated back into the core system via a merge. Coupled with semantic versioning, where a MAJOR.MINOR.PATCH number signals whether a release is breaking, adds functionality, or fixes a defect, this process preserves system stability while fostering rapid innovation.
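To make the numbering scheme concrete, here is a minimal sketch of how a release script might compute the next semantic version. The function name and the example changes in the comments are illustrative assumptions rather than part of any particular tool.

```python
from typing import Literal

Change = Literal["major", "minor", "patch"]


def bump_version(version: str, change: Change) -> str:
    """Return the next MAJOR.MINOR.PATCH version for the given kind of change."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "major":
        # Breaking change: e.g. a model API whose output schema differs.
        return f"{major + 1}.0.0"
    if change == "minor":
        # Backward-compatible addition: e.g. a new optional output field.
        return f"{major}.{minor + 1}.0"
    if change == "patch":
        # Backward-compatible fix: e.g. a corrected preprocessing bug.
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


print(bump_version("1.4.2", "minor"))  # 1.5.0
```

Resetting the lower components on a MAJOR or MINOR bump is what lets consumers read compatibility directly from the number.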

Centralized vs. Distributed: A Strategic Comparison

Legacy centralized systems create bottlenecks and single points of failure, ultimately failing the scalability test for global AI initiatives. In contrast, distributed version control empowers every engineer with a full project history, fostering resilience and speed. This topology is purpose-built for the hybrid cloud environments and high-velocity AI engineering that define modern intelligent automation, allowing parallel development streams to advance without friction and ensuring operational continuity.
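The property that makes distributed history trustworthy is that each commit is identified by a hash covering its content and its parent's identifier, so any full clone can check the entire chain on its own. The toy model below illustrates that idea; it is a simplification, not Git's actual object format (Git hashes typed objects, using SHA-1 by default), and the `Commit` and `verify` names are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commit:
    parent: Optional[str]  # ID of the previous commit; None for the first commit
    author: str
    message: str

    @property
    def id(self) -> str:
        # The ID covers the parent's ID, so editing any earlier commit
        # would change the IDs of every commit after it.
        payload = f"{self.parent}|{self.author}|{self.message}"
        return hashlib.sha256(payload.encode()).hexdigest()


def verify(history: list[Commit]) -> bool:
    """Check that every commit points at the ID of the commit before it."""
    return all(curr.parent == prev.id for prev, curr in zip(history, history[1:]))


root = Commit(None, "alice", "Add baseline model")
origin = [root, Commit(root.id, "bob", "Tune learning rate")]

# A clone copies the complete history, not just the latest snapshot,
# so work can continue (and be verified) without contacting a server.
clone = list(origin)
clone.append(Commit(clone[-1].id, "carol", "Add evaluation script"))
print(verify(origin), verify(clone))  # True True
```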

Beyond Code: The Three Pillars of Modern AI Versioning

The principles of DevOps established a solid foundation for software delivery, but the transition to MLOps and Agentic AI engineering demands a more comprehensive approach. While traditional Git workflows are necessary for managing source code, they are insufficient for the dynamic, multi-faceted nature of intelligent systems. To achieve operational excellence, modern enterprises must adopt a "Versioning Everything" strategy. This holistic approach to version control future-proofs your AI investments and enhances Human-AI synergy by creating a transparent, auditable record of every change.

This disciplined approach is built on three core pillars:

Source Code and Prompt Engineering

Your autonomous agents and voice interfaces are powered by more than just code; they run on carefully crafted prompts. These prompt libraries are critical enterprise assets that define agent behavior and user interaction. Versioning this "logic layer" is essential for tracking performance, managing updates, and integrating directly with Customer Experience (CX) improvement frameworks to deliver consistent, high-quality outcomes.
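One lightweight way to treat prompts as versioned assets is to store every revision under a content hash, so each agent response can be traced to the exact prompt text that produced it. `PromptRegistry` is a hypothetical in-memory sketch; in practice the revisions would live in a Git repository or a database.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store of prompt revisions, keyed by prompt name."""

    revisions: dict[str, list[tuple[str, str]]] = field(default_factory=dict)

    def publish(self, name: str, text: str) -> str:
        """Record a revision if the text changed; return its short content hash."""
        digest = hashlib.sha256(text.encode()).hexdigest()[:12]
        history = self.revisions.setdefault(name, [])
        if not history or history[-1][0] != digest:
            history.append((digest, text))
        return digest

    def get(self, name: str, digest: Optional[str] = None) -> str:
        """Return the revision with the given hash, or the latest one."""
        history = self.revisions[name]
        if digest is None:
            return history[-1][1]
        for rev_digest, text in history:
            if rev_digest == digest:
                return text
        raise KeyError(f"{name}@{digest} not found")


registry = PromptRegistry()
v1 = registry.publish("invoice-agent", "Extract the invoice total.")
v2 = registry.publish("invoice-agent", "Extract the invoice total and currency.")
print(registry.get("invoice-agent"))      # latest revision
print(registry.get("invoice-agent", v1))  # exact text behind an older response
```

Logging the short hash alongside each agent response is what closes the loop with CX metrics: a change in satisfaction can be tied to a specific prompt revision.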

Data Version Control (DVC) and Lineage

AI models are inseparable from the data they are trained on. DVC extends versioning principles to the large, unstructured datasets that fuel modern AI: it commits small metadata files containing content hashes to Git, while the data itself lives in a separate cache or remote storage. By tracking the evolution of your data, you ensure complete reproducibility, linking every model version to its exact training dataset. This traceability supports alignment with governance standards like the NIST AI Risk Management Framework and provides the historical context needed to investigate data drift or roll back to previous states with confidence.
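The core mechanism can be approximated in a few lines: hash the data file, commit only a small pointer containing that hash, and keep the bytes in separate storage. The sketch below is loosely modeled on that idea; the JSON pointer layout, the `.ptr.json` suffix, and the function names are assumptions and do not reproduce DVC's own file format or API.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_pointer(data_path: Path) -> Path:
    """Write a small JSON pointer file: cheap to commit to Git, unlike the data."""
    pointer = data_path.with_name(data_path.name + ".ptr.json")
    record = {"path": data_path.name, "sha256": file_sha256(data_path)}
    pointer.write_text(json.dumps(record, indent=2))
    return pointer


def matches_pointer(data_path: Path, pointer: Path) -> bool:
    """True if the data on disk is exactly the version the pointer records."""
    recorded = json.loads(pointer.read_text())
    return recorded["sha256"] == file_sha256(data_path)


with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "train.csv"
    data.write_text("text,label\ninvoice overdue,1\n")
    pointer = write_pointer(data)
    unchanged = matches_pointer(data, pointer)  # True
    data.write_text("text,label\ninvoice overdue,0\n")  # a label is edited
    changed = matches_pointer(data, pointer)  # False: no longer the committed version
```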

Model Weight and Hyperparameter Versioning

To manage the "black box" of machine learning, you must version its internal components. This includes the trained model weights and the specific hyperparameters used during training. A robust version control strategy for these artifacts makes model behavior traceable, because any change in output can be tied to a specific change in weights, data, or configuration, and it enables seamless A/B testing or canary deployments for new AI agents. Model registries act as the central repository, maintaining a reliable and stable portfolio of production-ready models.
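A registry entry only makes behavior traceable if it ties the weights to everything needed to reproduce them. The record below is a hypothetical sketch, not the schema of any particular registry product; the field names are assumptions, and the hash and commit values are placeholders.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    weights_sha256: str    # hash of the serialized weights file
    dataset_sha256: str    # hash of the exact training-data snapshot
    code_commit: str       # Git commit of the training code
    hyperparameters: dict  # e.g. learning rate, batch size, epochs

    def fingerprint(self) -> str:
        """Hash over every field: identical inputs always give the same value."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode()).hexdigest()


def diff_hyperparameters(old: ModelVersion, new: ModelVersion) -> dict:
    """Return {name: (old_value, new_value)} for each hyperparameter that changed."""
    keys = old.hyperparameters.keys() | new.hyperparameters.keys()
    return {
        key: (old.hyperparameters.get(key), new.hyperparameters.get(key))
        for key in sorted(keys)
        if old.hyperparameters.get(key) != new.hyperparameters.get(key)
    }


# Placeholder hashes and commit IDs, for illustration only.
v1 = ModelVersion("invoice-ocr", 1, "w-placeholder-1", "d-placeholder-1", "c-placeholder-1",
                  {"learning_rate": 3e-4, "epochs": 10})
v2 = replace(v1, version=2, weights_sha256="w-placeholder-2",
             hyperparameters={"learning_rate": 1e-4, "epochs": 10})
print(diff_hyperparameters(v1, v2))
```

When a canary of `v2` behaves differently from `v1`, the diff narrows the investigation to the fields that actually changed.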

The ROI of Versioning: Risk Mitigation and Compliance

Viewing version control as a mere developer utility is a critical strategic error. In reality, it is a foundational pillar of modern corporate governance and risk management. The misconception that versioning is a "cost center" ignores the immense financial and reputational damage of "versioning debt," the accumulated risk from untracked, unverified changes in critical systems. This is especially true in enterprise-scale AI, where a single flawed model deployment can have cascading consequences. Implementing a robust versioning strategy transforms compliance from a reactive bottleneck into a proactive, competitive advantage.

Auditability and Regulatory Validation

In an environment of increasing regulatory scrutiny, providing clear evidence of due diligence is non-negotiable. Frameworks like SOC2, GDPR, and Sarbanes-Oxley (SOX) expect organizations to demonstrate controlled, documented change management, and a verifiable, tamper-evident history of every change to code, data, and models is among the strongest evidence you can offer. Integrated versioning pipelines automate the collection of these audit logs, demonstrating control and transparency. This capability sharply reduces "black box" liability, particularly in financial services, where an inability to explain an automated decision can lead to severe penalties. Versioning provides the definitive record of who changed what, when, and why.
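Because Git already records who made each change, when, and (in the commit message) why, much of this evidence can be exported from history rather than compiled by hand. The sketch below parses `git log` output produced with a custom `--pretty=format:` string, using Git's standard `%H`, `%an`, `%aI`, `%s`, and `%x09` (tab) placeholders. The `AuditEntry` type, the audit-window helper, and the sample data are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime

# Tab-separated fields: commit hash, author name, author date (strict ISO 8601), subject.
# Produce matching input with:  git log --pretty=format:'%H%x09%an%x09%aI%x09%s' -- models/
LOG_FORMAT = "%H%x09%an%x09%aI%x09%s"


@dataclass
class AuditEntry:
    commit: str
    author: str
    timestamp: datetime
    summary: str


def parse_git_log(output: str) -> list[AuditEntry]:
    """Turn `git log` output in LOG_FORMAT into structured audit records."""
    entries = []
    for line in output.splitlines():
        if not line.strip():
            continue
        commit, author, date, summary = line.split("\t", 3)
        entries.append(AuditEntry(commit, author, datetime.fromisoformat(date), summary))
    return entries


def changes_between(entries: list[AuditEntry], start: datetime, end: datetime) -> list[AuditEntry]:
    """Select the changes that fall inside an audit window (inclusive)."""
    return [e for e in entries if start <= e.timestamp <= end]


# Illustrative sample output; the hashes are shortened placeholders.
SAMPLE = (
    "a1b2c3d\talice\t2025-03-14T09:30:00+00:00\tfeat: retrain invoice OCR on Q1 data\n"
    "e4f5a6b\tbob\t2025-01-20T16:05:00+00:00\tfix: mask account numbers before training\n"
)

entries = parse_git_log(SAMPLE)
march = changes_between(entries,
                        datetime.fromisoformat("2025-03-01T00:00:00+00:00"),
                        datetime.fromisoformat("2025-03-31T23:59:59+00:00"))
print([(e.author, e.summary) for e in march])
```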

Disaster Recovery and Operational Resilience

Effective version control is the "Undo" button for your entire enterprise technology stack. When a flawed deployment compromises a production AI system, the goal is immediate recovery. Versioning enables rapid, precise rollbacks to a last-known-good state, which can cut Mean Time to Recovery (MTTR) from hours to minutes. This operational resilience also extends to protecting core intellectual property. In the event of a cloud infrastructure failure or cyber-attack, replicated copies of your versioned repositories, held as full clones and mirrors, act as an additional layer of backup for your most valuable digital assets, supporting business continuity.
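Rollback is fast when each deployment is recorded as a pointer to a versioned artifact: recovery means re-pointing production at the last release that passed its checks, not rebuilding anything. `DeploymentHistory` below is a hypothetical sketch of that bookkeeping; the health flag stands in for whatever monitoring signal you actually use.

```python
from dataclasses import dataclass, field


@dataclass
class Release:
    version: str
    healthy: bool = True


@dataclass
class DeploymentHistory:
    """Hypothetical record of production releases, newest last."""

    releases: list[Release] = field(default_factory=list)

    @property
    def live(self) -> str:
        return self.releases[-1].version

    def deploy(self, version: str) -> None:
        self.releases.append(Release(version))

    def mark_unhealthy(self) -> None:
        """Flag the live release, e.g. after a failed health check or accuracy alert."""
        self.releases[-1].healthy = False

    def rollback(self) -> str:
        """Redeploy the most recent earlier release still marked healthy."""
        for release in reversed(self.releases[:-1]):
            if release.healthy:
                # Append rather than delete, so the failed release stays in the audit trail.
                self.deploy(release.version)
                return release.version
        raise RuntimeError("no known-good release to roll back to")


history = DeploymentHistory()
history.deploy("invoice-ocr-1.4.0")
history.deploy("invoice-ocr-1.5.0")
history.mark_unhealthy()       # regression detected in production
restored = history.rollback()  # "invoice-ocr-1.4.0"
```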

Implementing Robust Versioning Strategies for Agentic AI

Theoretical understanding of version control is insufficient for enterprise-grade AI. The next step is operationalizing this discipline. This requires embedding a "versioning first" culture across your multidisciplinary teams-from data scientists to business analysts. Every change to a model, prompt, or data pipeline must be captured with intent and clarity. Move beyond purely technical commit messages; standardize a format that reflects business impact, such as "feat: Reduced invoice processing time by 15% with new OCR model."
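A standard format is easiest to keep when it is checked automatically, for example from a `commit-msg` Git hook (which receives the path of the file holding the proposed message as its only argument) or a CI job. The checker below is a sketch: the allowed types loosely follow the Conventional Commits convention, and the minimum word count is a deliberately rough, assumed proxy for a message that states business impact.

```python
import re

# Allowed types loosely follow the Conventional Commits convention; adapt to your team.
SUBJECT = re.compile(r"^(feat|fix|perf|refactor|docs|test|chore)(\([a-z0-9-]+\))?: (?P<summary>.+)$")
MAX_SUBJECT_LENGTH = 72
MIN_SUMMARY_WORDS = 4  # a rough proxy for "says what changed and why it matters"


def check_commit_message(message: str) -> list[str]:
    """Return the problems found in the subject line; an empty list means it passes."""
    lines = message.splitlines()
    subject = lines[0] if lines else ""
    problems = []
    match = SUBJECT.match(subject)
    if match is None:
        problems.append("subject must start with '<type>: ', e.g. 'feat: ...'")
    elif len(match.group("summary").split()) < MIN_SUMMARY_WORDS:
        problems.append("summary too short to describe the business impact")
    if len(subject) > MAX_SUBJECT_LENGTH:
        problems.append(f"subject longer than {MAX_SUBJECT_LENGTH} characters")
    return problems


print(check_commit_message("feat: Reduced invoice processing time by 15% with new OCR model."))  # []
print(check_commit_message("fix: typo"))
```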

For maximum efficiency, automate versioning triggers directly within your intelligent automation workflows. When a new document template is validated or a business rule is updated, the system should automatically commit this change to the repository. Integrating a robust version control system with your MLOps pipelines creates a seamless, auditable, and resilient foundation for continuous monitoring and improvement. This transforms versioning from a manual task into a strategic, automated asset.
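As a sketch of such a trigger, assume a hypothetical `on_rule_validated` handler that your workflow engine calls once a business rule passes validation. It writes the rule to a tracked JSON file and commits it with the standard `git add` and `git commit` commands, skipping the commit when nothing changed. The file layout and message wording are assumptions; the `run` parameter allows a dry run without a repository, as in the usage below, while real use requires `git` to be installed and `repo_dir` to be an existing repository.

```python
import json
import subprocess
import tempfile
from pathlib import Path
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], object]


def run_git(command: Sequence[str]) -> object:
    """Default runner: execute the command and raise if git reports an error."""
    return subprocess.run(command, check=True)


def on_rule_validated(repo_dir: Path, rule_id: str, rule: dict, validated_by: str,
                      run: Runner = run_git) -> bool:
    """Hypothetical hook: save a validated rule and commit it. Returns False if unchanged."""
    target = repo_dir / "rules" / f"{rule_id}.json"
    content = json.dumps(rule, indent=2, sort_keys=True) + "\n"
    if target.exists() and target.read_text() == content:
        return False  # identical to the last saved version: skip an empty commit
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(content)
    relative = target.relative_to(repo_dir).as_posix()
    run(["git", "-C", str(repo_dir), "add", relative])
    run(["git", "-C", str(repo_dir), "commit", "-m",
         f"chore(rules): update {rule_id} (validated by {validated_by})"])
    return True


# Dry run: record the git commands instead of executing them.
calls: list[Sequence[str]] = []
with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    first = on_rule_validated(repo, "vat-threshold", {"max_amount": 10000}, "j.smith", run=calls.append)
    again = on_rule_validated(repo, "vat-threshold", {"max_amount": 10000}, "j.smith", run=calls.append)
```

Serializing with sorted keys keeps the file byte-stable, so an unchanged rule never produces a spurious commit.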

Best Practices for Distributed AI Teams

To maintain velocity without sacrificing stability, define a clear branching strategy. While GitFlow offers a structured release process suitable for major model deployments, Trunk-Based Development provides the agility needed for rapid iteration on prompts and agent behaviors. Enforce mandatory peer reviews for all critical changes to ensure quality and knowledge sharing. Use tags and baselines to create immutable milestones, marking specific versions of your AI systems as production-ready or validated for client deployment.
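Hosting platforms such as GitHub and GitLab let you enforce these rules through branch and tag protection settings rather than code. To make the logic explicit, the hypothetical `ReleaseGate` below performs the two checks described above: a minimum number of approvals from reviewers other than the author, and milestone tags that cannot be moved once created. The threshold and names are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseGate:
    """Hypothetical merge-and-tag policy; hosting platforms offer similar settings."""

    required_approvals: int = 2
    tags: dict[str, str] = field(default_factory=dict)  # tag name -> commit ID

    def can_merge(self, approvals: set[str], author: str) -> bool:
        """Require enough approvals from reviewers other than the change's author."""
        return len(approvals - {author}) >= self.required_approvals

    def tag(self, name: str, commit: str) -> None:
        """Create a milestone tag; refuse to move an existing tag to another commit."""
        existing = self.tags.get(name)
        if existing is not None and existing != commit:
            raise ValueError(f"tag {name!r} already points at {existing}")
        self.tags[name] = commit


gate = ReleaseGate()
print(gate.can_merge({"bob", "carol"}, author="alice"))  # True
print(gate.can_merge({"alice", "bob"}, author="alice"))  # False: self-approval does not count
gate.tag("v2.1.0-validated", "a1b2c3d")  # placeholder commit ID
```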

Tooling and Infrastructure Selection

Your choice of tooling is a long-term strategic decision. Evaluate your infrastructure to ensure it supports the unique demands of AI development and prepares you for the future of autonomous agent orchestration.

- Git-Based Platforms: Standardize on an enterprise-grade platform like GitHub or GitLab for their robust security, access controls, and integration capabilities essential for protecting sensitive models and data.

- Specialized Tooling: Augment Git with specialized tools like DVC (Data Version Control) or Git LFS to efficiently manage large-scale datasets and model artifacts that Git alone handles poorly.

- Future-Proofing Your Stack: The rise of Agentic AI requires a forward-thinking approach to your entire technology stack. Building a scalable, versioned foundation is a core principle of the bespoke integrations we design at IntellifyAi.

- User Interface and Experience: The final layer of your stack is the user-facing application. Partnering with a creative web design agency is crucial for translating complex AI functionalities into an intuitive and engaging customer experience. For an example of a firm that excels in this area, see Insight Multimedia.
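For the specialized tooling above, Git LFS decides which files bypass normal Git storage through entries in `.gitattributes`; running `git lfs track "*.onnx"` adds a line of exactly the form generated below. This helper is a hypothetical convenience, not part of Git LFS, that lets the list of large-artifact patterns be reviewed and versioned like any other change. The extensions are examples only, and each clone still needs Git LFS installed (`git lfs install`) for the filter to take effect.

```python
import tempfile
from pathlib import Path

# Example artifact patterns; adjust to the formats your teams actually produce.
MODEL_PATTERNS = ["*.onnx", "*.safetensors", "*.pt", "*.parquet"]


def lfs_line(pattern: str) -> str:
    """One .gitattributes entry in the form that `git lfs track` writes."""
    return f"{pattern} filter=lfs diff=lfs merge=lfs -text"


def add_lfs_patterns(gitattributes: Path, patterns: list[str]) -> int:
    """Append any missing LFS entries to .gitattributes; return how many were added."""
    text = gitattributes.read_text() if gitattributes.exists() else ""
    existing = set(text.splitlines())
    new_lines = [lfs_line(p) for p in patterns if lfs_line(p) not in existing]
    if new_lines:
        separator = "" if not text or text.endswith("\n") else "\n"
        gitattributes.write_text(text + separator + "".join(line + "\n" for line in new_lines))
    return len(new_lines)


with tempfile.TemporaryDirectory() as tmp:
    attributes = Path(tmp) / ".gitattributes"
    attributes.write_text("*.txt text")  # existing entry without a trailing newline
    added = add_lfs_patterns(attributes, MODEL_PATTERNS)        # 4
    added_again = add_lfs_patterns(attributes, MODEL_PATTERNS)  # 0: already present
    final_lines = attributes.read_text().splitlines()
```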

How IntellifyAi Future-Proofs Your Enterprise Governance

Understanding the principles of version control is the first step. Implementing it across a complex AI ecosystem is the strategic challenge where enterprises succeed or fail. IntellifyAi acts as the Strategic Architect for your AI modernization, moving beyond theory to execution. Our Agentic AI engineering services bake versioning directly into every deployment, ensuring your AI assets are traceable, reproducible, and auditable from day one. We transform governance from a reactive necessity into a proactive, competitive advantage.

Bespoke AI Strategy and Governance Consulting

We develop custom roadmaps for AI asset management that bridge the gap between legacy modernization and cutting-edge automation. Our consulting ensures your AI strategy is not only powerful but also scalable, secure, and compliant by design. We build the framework for durable AI governance, enabling you to innovate with confidence and control.

The i_Nova Advantage in Document Intelligence

Our flagship platform, i_Nova, excels in managing document versions across unstructured formats, a critical challenge in enterprise AI. By embedding intelligent workflow orchestration, i_Nova delivers operational excellence and a single source of truth for your most vital data. Ready to build a resilient foundation for your intelligent automation? Contact us to modernize your enterprise AI infrastructure.

The complexity of managing models, data, and code simultaneously can overwhelm internal teams. This is why our MLOps managed services are integral to future-proofing your operations. We handle the intricate backend processes, implementing enterprise-grade version control for your entire machine learning lifecycle. This allows your teams to focus on high-value innovation, secure in the knowledge that their AI ecosystem is stable, governed, and built for sustained growth.

Secure Your AI Trajectory: The Imperative of Enterprise Versioning

The path to 2026 is defined by intelligent automation, and the foundational pillar of this transformation is clear: enterprise-grade AI governance. This requires expanding our perspective beyond code management to a holistic strategy that encompasses models, data, and agentic workflows. A robust framework for version control is not merely a technical requirement; it is the strategic mechanism that ensures auditability, mitigates operational risk, and unlocks scalable, predictable ROI from your AI investments.

Building this future-proof foundation requires a partner with proven expertise in workflow orchestration. IntellifyAi delivers operational excellence through our proprietary i_Nova IDP platform, backed by ISO and SOC2 compliant frameworks. With a global presence across the UK, US, and India, we are positioned to architect your governed AI ecosystem. Partner with IntellifyAi to build a governed, scalable AI future. Embrace the synergy of human ingenuity and intelligent automation, and position your enterprise for transformative growth.

Frequently Asked Questions

What is the difference between version control and source control?

The two terms are often used interchangeably. Where a distinction is drawn, source control is a subset of version control, focused exclusively on managing source code, while version control is a broader strategic discipline that tracks changes to all project assets, including datasets, models, and configuration files. For enterprise AI, this distinction is critical. To achieve true reproducibility and operational excellence, you must version the entire digital ecosystem, not just the code that builds it.

Why is version control important for AI and machine learning?

In AI development, reproducibility is paramount. Version control provides an immutable, time-stamped record of not just code, but also the datasets, models, and hyperparameters used in every experiment. This is the foundation of MLOps, enabling teams to reliably retrain, deploy, and roll back models. It transforms AI from an experimental process into a scalable, auditable, and enterprise-grade operational capability.

Can version control help with GDPR and SOC2 compliance?

Yes, it is an essential component. Compliance frameworks like GDPR and SOC2 require organizations to demonstrate accountability, controlled change management, and governance over their systems and data. Version control delivers a transparent, chronological log of every change to data, models, and access policies. This provides concrete evidence of control over data lineage and system integrity, simplifying audits and strengthening your security posture against regulatory scrutiny.

How does versioning work for unstructured data in IDP platforms?

In Intelligent Document Processing (IDP), versioning tracks changes to both the extraction models and the documents they process. It logs updates to parsing logic, annotation guidelines, and the training data itself. This dual-tracking system allows you to revert to a previous model if performance degrades or trace a processed document back to the exact model version that handled it, ensuring complete traceability and quality control.
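The dual tracking described above can be sketched as a processing ledger: each document is identified by a hash of its exact bytes and linked to the extraction-model version that handled it. The class and method names are hypothetical, and a production system would keep these records in durable storage rather than in memory.

```python
import hashlib
from collections import defaultdict


class ProcessingLedger:
    """Hypothetical record linking each processed document to a model version."""

    def __init__(self) -> None:
        self.model_for_document: dict[str, str] = {}
        self.documents_for_model: dict[str, list[str]] = defaultdict(list)

    @staticmethod
    def document_id(document: bytes) -> str:
        """Identify a document by the hash of its exact bytes."""
        return hashlib.sha256(document).hexdigest()

    def record(self, document: bytes, model_version: str) -> str:
        doc_id = self.document_id(document)
        self.model_for_document[doc_id] = model_version
        self.documents_for_model[model_version].append(doc_id)
        return doc_id

    def trace(self, document: bytes) -> str:
        """Return the model version that most recently processed this document."""
        return self.model_for_document[self.document_id(document)]

    def affected_by(self, model_version: str) -> list[str]:
        """Documents to re-check or re-process if this model version is rolled back."""
        return list(self.documents_for_model.get(model_version, []))


ledger = ProcessingLedger()
ledger.record(b"%PDF-1.7 invoice-001 (sample bytes)", "extractor-2.3.0")
ledger.record(b"%PDF-1.7 invoice-002 (sample bytes)", "extractor-2.4.0")
print(ledger.trace(b"%PDF-1.7 invoice-002 (sample bytes)"))  # extractor-2.4.0
```

The reverse index is what makes a rollback actionable: it tells you exactly which documents the degraded model touched.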

Is Git the only option for enterprise version control in 2026?

While Git excels at managing source code, it is not optimized for the large-scale datasets and models central to AI. By 2026, the enterprise standard is likely to be a hybrid approach: leading organizations will use Git for code alongside specialized tools like DVC or Pachyderm for data and model versioning. This layered strategy is the most effective way to manage the complexity of modern AI workflows.

What happens if an enterprise ignores version control in AI development?

Ignoring version control introduces profound business risk. It makes results irreproducible, blocks effective debugging, and renders audits impossible. Collaboration breaks down, development velocity stalls, and the AI system becomes an unmanageable "black box." This fundamentally undermines scalability and reliability, turning a strategic AI investment into a costly operational liability that cannot deliver consistent ROI.

How does versioning improve the customer experience (CX) in AI agents?

Versioning enables a systematic, data-driven approach to enhancing AI agent performance, which directly impacts CX. Teams can safely A/B test new conversational flows or updated models, measure their effect on customer satisfaction, and instantly roll back any changes that degrade the experience. This disciplined process ensures every update is a deliberate improvement, leading to a more consistent, reliable, and intelligent customer interaction.
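One common way to run such tests safely is deterministic traffic splitting: hashing a stable customer ID so that each customer consistently sees the same agent version, with a single setting that sends everyone back to the control version. The sketch below assumes hypothetical version labels and a 10% treatment share; it covers routing only, not the statistical evaluation of satisfaction metrics.

```python
import hashlib


def assign_version(customer_id: str, treatment_share: float,
                   control: str = "agent-v1", treatment: str = "agent-v2") -> str:
    """Deterministically route a customer to the control or treatment agent version."""
    if not 0.0 <= treatment_share <= 1.0:
        raise ValueError("treatment_share must be between 0 and 1")
    # Map the customer ID to a stable number in [0, 1).
    bucket = int(hashlib.sha256(customer_id.encode()).hexdigest()[:8], 16) / 2**32
    return treatment if bucket < treatment_share else control


customers = [f"cust-{i}" for i in range(1000)]
rollout = [assign_version(c, treatment_share=0.10) for c in customers]
share = rollout.count("agent-v2") / len(customers)  # close to 0.10, not exact

# Rolling back is a configuration change: with a share of 0, everyone gets agent-v1.
rolled_back = {assign_version(c, treatment_share=0.0) for c in customers}
```

Because each customer's bucket never changes, raising the share only moves customers from control to treatment, which keeps individual experiences consistent during a gradual rollout.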

What is the role of human-AI synergy in version management?

Version management is a critical enabler of human-AI synergy. It creates a shared, transparent history that both human experts and autonomous systems can reference and act upon. Humans use this history to provide strategic oversight and debug complex issues. AI systems leverage it for automated retraining and validation. This creates a powerful feedback loop where human intellect guides AI evolution toward defined business goals.
