A high-performing model in a development environment is a promise of value. A model that fails, degrades, or requires a slow, manual deployment process is a costly reality for too many enterprises. This disconnect between research and production undermines ROI and throttles innovation, stemming from a single, critical gap: the absence of a systematic, automated framework for operationalizing machine learning. True scalability and reliability are not achieved by simply deploying a model; they are engineered through intelligent, end-to-end MLOps pipelines.

This definitive 2026 guide moves beyond abstract theory to deliver a strategic blueprint for execution. We will equip you with the principles to design, build, and manage robust automations that generate tangible business outcomes. You will gain a clear understanding of each pipeline stage, a framework for choosing the right level of workflow orchestration for your organization, and the confidence to transform ad-hoc experiments into a seamless engine for continuous, predictable value.

Key Takeaways

- Pinpoint the exact reasons ad-hoc ML deployments fail and learn to bridge the critical gap between development and production value.

- Master the four key stages of production-grade mlops pipelines to build a repeatable, automated system for delivering consistent business value.

- Assess your organization’s current ML maturity and map a clear, strategic path from manual processes to full CI/CD for ML.

- Define the criteria for choosing a strategic partner to architect your MLOps capability, ensuring long-term scalability and operational excellence.

Why Ad-Hoc ML Deployments Fail: The Business Case for Pipelines

The vast majority of machine learning models deliver zero business value. Industry reports indicate that over 80% of AI projects never make it into production, stalling in an experimental phase. This failure stems from the critical gap between a data scientist’s notebook and a live, operational environment. Ad-hoc, manual deployments are brittle and cannot withstand the pressures of a dynamic business landscape.

Models fail in production not because of poor algorithms, but because of operational breakdown. The core technical failure points directly translate into significant business risk:

- Data Drift: The statistical properties of the input data change over time, rendering the model's predictions inaccurate and unreliable.

- Model Decay: The model's predictive power erodes as the real-world patterns it was trained on become obsolete.

- Lack of Reproducibility: Without versioned data, code, and parameters, it is impossible to debug failures, pass audits, or reliably retrain a model.

These technical issues create tangible consequences, including lost revenue from flawed recommendations, severe compliance risk from unexplainable decisions, and millions in wasted R&D investment.

The Hidden Costs of Manual ML Operations

Manual ML deployments are an exercise in inefficiency. Every manual handoff introduces delay, costing you market opportunity as competitors move faster. Worse, a stale model making decisions on outdated information is not a neutral asset; it is an active liability. This process also misallocates your most valuable technical talent, forcing elite data scientists to perform manual DevOps tasks instead of driving innovation. The operational drag becomes a direct tax on growth.

From Technical Debt to Strategic Asset

The solution is to transform this technical debt into a strategic asset through systematic automation. This is the central purpose of MLOps pipelines-a set of practices designed to make machine learning delivery predictable, reliable, and scalable. For a foundational overview of its principles, you can reference this community-vetted guide on What is an MLOps Pipeline? Core Principles of Automation. By implementing robust mlops pipelines, you convert ML from a high-risk research activity into a core driver of operational excellence and competitive advantage.

What is an MLOps Pipeline? Core Principles of Automation

An MLOps pipeline is the operational backbone of a modern machine learning system. It is an automated workflow that orchestrates the end-to-end process of building, testing, deploying, and monitoring ML models. Its purpose is to achieve operational excellence by making the ML lifecycle predictable, reliable, and scalable. Unlike traditional software CI/CD, which focuses on application code, MLOps must manage the dual complexities of code, data, and models as a single, cohesive unit. This demands a foundation built on three core principles: ruthless automation, absolute reproducibility, and seamless collaboration.

The 'Continuous Everything' Framework: CI/CD/CT/CM

Effective mlops pipelines extend the DevOps paradigm with concepts unique to the machine learning lifecycle. This "Continuous Everything" framework ensures that models remain relevant and performant long after their initial deployment. Understanding the complete Anatomy of a Production-Grade MLOps Pipeline: 4 Key Stages is critical for implementation.

- Continuous Integration (CI): This goes beyond code. CI in MLOps involves automatically testing and validating data, schemas, and model components to ensure system integrity with every change.

- Continuous Delivery (CD): CD automates the deployment of a trained model or the entire ML training service, delivering a reliable and repeatable release process that minimizes manual intervention.

- Continuous Training (CT): A crucial differentiator, CT automatically retrains models on new data. This proactive process combats model decay and ensures predictions remain accurate as real-world patterns evolve.

- Continuous Monitoring (CM): Post-deployment, CM tracks live model performance against both technical metrics (e.g., latency, error rates) and business KPIs, triggering alerts or retraining pipelines when performance degrades.

Key Stakeholders and Their Roles

A successful pipeline is not just a technical artifact; it is a collaborative platform that aligns diverse teams toward a common goal. This structure enables Human-AI Synergy by allowing each expert to focus on their highest-value work.

- Data Scientists: Focus on experimentation, feature engineering, and model algorithm selection. The pipeline empowers them to iterate rapidly and test hypotheses in a production-like environment.

- ML Engineers: Architect, build, and maintain the pipeline infrastructure. They translate data science prototypes into robust, scalable, and automated production systems.

- DevOps/IT Professionals: Manage and secure the underlying infrastructure. They provide the stable compute, storage, and networking resources that the entire MLOps lifecycle depends on.

Anatomy of a Production-Grade MLOps Pipeline: 4 Key Stages

A production-grade machine learning model is not a static artifact; it is the output of a dynamic, automated system. Effective mlops pipelines orchestrate the flow from raw data to business value, ensuring reproducibility, scalability, and reliability. This process is best understood as a sequence of four interconnected, automated stages designed for operational excellence.

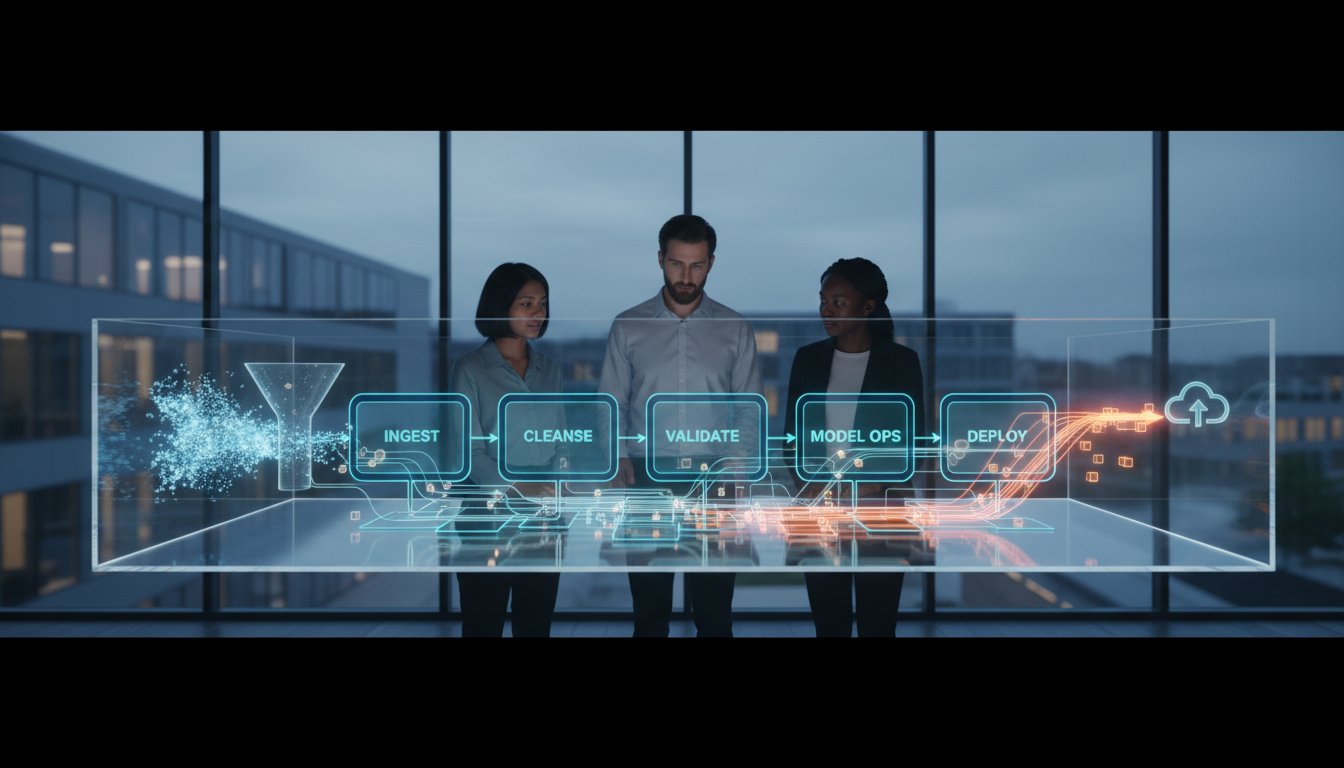

[Visual Diagram: A flowchart illustrating the four stages from Data Ingestion to Monitoring, with arrows indicating the feedback loop from monitoring back to data ingestion.]

Figure 3.1: The Continuous Lifecycle of an MLOps Pipeline

Stage 1: Data Ingestion and Validation

The pipeline begins with automated data extraction from sources like data lakes or enterprise warehouses. This stage is critical for establishing a trustworthy foundation. We implement automated validation checks to detect data quality issues, schema changes, and distribution shifts before they corrupt the model. To ensure full reproducibility, datasets are version-controlled using tools like DVC (Data Version Control), treating data with the same rigor as code.

Stage 2: Model Training, Tuning, and Validation

Here, the system automates model training and hyperparameter tuning to find the optimal configuration. Every experiment-including parameters, code versions, and results-is meticulously tracked with platforms like MLflow. This creates an auditable record of model lineage. Crucially, model validation moves beyond simple accuracy to focus on business-relevant metrics, ensuring the final model candidate delivers tangible ROI.

Stage 3: Model Deployment and Serving

Once validated, the model is packaged into a portable container using Docker for consistent execution across environments. We then employ strategic deployment methods to minimize risk and ensure a seamless transition to production:

- Canary Deployments: Route a small percentage of traffic to the new model.

- Blue-Green Deployments: Maintain two identical environments for instant rollback.

- Shadow Deployments: Run the new model in parallel with the old to test performance without impacting users.

The model is then served via a scalable API endpoint, often managed by an orchestrator like Kubernetes to handle variable loads.

Stage 4: Performance Monitoring and Feedback Loop

A deployed model requires continuous vigilance. This final stage establishes a robust monitoring system to track both operational health (latency, error rates) and model performance (data and concept drift). Automated alerts immediately flag any degradation. This creates a powerful feedback loop, capturing new production data to trigger the continuous training (CT) of future model versions, future-proofing your AI investment.

Designing Your MLOps Pipeline: From Simple Automation to Full CI/CD

Operationalizing machine learning is an evolutionary process, not a monolithic task. The perception of overwhelming complexity often stalls progress, but the key is to assess your organization's current maturity and build incrementally. A full CI/CD system is the destination for some, but effective mlops pipelines begin with strategic automation. This framework helps you identify your position and define the next logical step toward operational excellence.

Most teams begin at Level 0: The Manual Process, where data scientists handle everything from data preparation to model deployment by hand. This is effective for initial exploration but is not scalable or repeatable. Recognizing this is the first step toward intelligent automation.

Level 1: The Automated ML Pipeline

This level focuses on automating the core ML workflow: model retraining and deployment. It is the ideal target for teams with stable, well-defined use cases that require periodic updates. The goal is to create a repeatable and reliable training process. Key components include a workflow orchestrator like Apache Airflow or Kubeflow to manage the steps, alongside robust experiment tracking to ensure model lineage and reproducibility.

Level 2: The Full CI/CD Pipeline

This represents full MLOps maturity, where the entire ML application is treated as a software system. It automates not just model retraining but also the continuous integration and delivery of new features, tests, and pipeline components. This is essential for mature ML teams managing multiple models with rapid development cycles. Foundational components include source control (Git), automated build and test services, and container registries.

To architect your path forward, answer these critical questions:

- What is the primary trigger for model retraining-a fixed schedule, data drift detection, or new code?

- How will we version our datasets, model artifacts, and source code to ensure full traceability?

- What are the specific quality and business metric gates for promoting a model to production?

- How will we implement post-deployment monitoring for both system health and model performance?

Critical Design Considerations: Governance and Security

Enterprise-grade mlops pipelines must be built on a foundation of security and governance. Your architecture must incorporate automated security scanning for code dependencies and container images. Implement strict role-based access controls (RBAC) and maintain immutable audit trails for every model deployment. Designing for compliance from day one ensures your system is not only efficient but also secure and trustworthy.

Building Your MLOps Capability with a Strategic Partner

As we've explored, constructing performant and scalable mlops pipelines is not merely a technical task-it is a strategic imperative. The framework outlined in this article provides the blueprint, but execution requires deep expertise across data engineering, cloud infrastructure, and model governance. Attempting this journey alone often leads to fragmented systems, technical debt, and a delayed return on your AI investment.

This is where a strategic architect becomes essential. IntellifyAi operates not as a tool vendor, but as your dedicated partner in building a resilient, end-to-end MLOps capability. We bridge the gap between your business objectives and the complex technical reality of deploying and managing machine learning in production.

End-to-End MLOps Engineering and Consulting

Our services are designed to map directly to the MLOps lifecycle, ensuring a seamless and integrated solution from concept to continuous operation. We provide the strategic oversight and hands-on engineering to build the intelligent automation backbone your enterprise requires.

- AI Strategy & Consulting: We begin by aligning your AI initiatives with core business goals, designing a bespoke MLOps architecture that is both powerful and pragmatic.

- Data Engineering: Our experts engineer the robust data foundations-from ingestion and processing to feature stores-that are critical for reliable model performance at scale.

- Managed Services: We ensure your production models deliver sustained value through proactive monitoring, continuous optimization, and expert management of your operational pipelines.

Accelerate Your Path to MLOps Maturity

Partnering with IntellifyAi allows you to bypass the costly and time-consuming trial-and-error phase of MLOps development. By leveraging our proven architectures and deep industry experience, you gain immediate access to best practices and future-proof solutions.

Empower your data scientists to focus on what they do best: building innovative models that drive business value. We manage the underlying infrastructure, allowing your team to innovate without the burden of operational complexity. Transform your AI vision into a tangible competitive advantage, faster.

Architect your enterprise MLOps pipeline with an IntellifyAi expert.

Orchestrate Your Future: The Strategic Imperative of Automation

The era of ad-hoc machine learning is over. Enterprise success in 2026 and beyond depends on a transition from isolated models to robust, automated systems. As we've detailed, effective mlops pipelines are not just technical frameworks; they are strategic assets built on a deep understanding of core stages-from data ingestion to continuous monitoring. This commitment to operational excellence transforms AI initiatives from high-risk experiments into a scalable engine for continuous, predictable value delivery.

Designing and implementing this level of intelligent automation is a defining enterprise challenge. Partnering with a specialist accelerates your path to maturity and mitigates risk. At IntellifyAi, we translate complex AI potential into tangible business results. Our expertise in Agentic AI Engineering, Cloud-Native & Enterprise Modernization, and strategic consulting for global enterprises provides the architectural foundation for your success. Take the definitive step toward operational excellence. Schedule a consultation to build your enterprise MLOps strategy.

The future of your industry is being written with intelligent automation. It's time to take control of the narrative.

Frequently Asked Questions

What is the difference between MLOps and DevOps?

DevOps automates the software delivery lifecycle. MLOps adapts these principles for machine learning but adds critical layers of complexity. While DevOps focuses on code and infrastructure, MLOps must also manage the lifecycle of data and models. It introduces practices like data versioning, continuous training (CT), and model monitoring to handle the experimental and data-dependent nature of AI systems. This ensures reproducibility, governance, and sustained performance in a way that traditional DevOps cannot.

What are the essential stages of an ML pipeline?

Essential MLOps pipelines automate the end-to-end machine learning lifecycle. Core stages begin with Data Ingestion and Validation to ensure data quality. Next are Feature Engineering to prepare data for the model and Model Training itself. The pipeline then proceeds to Model Evaluation to assess performance against key business metrics. Finally, Model Deployment makes the validated model available for live predictions, ensuring a repeatable and scalable process for operational excellence.

What are the most common tools used for building MLOps pipelines?

Building robust MLOps pipelines requires a strategic toolset. For workflow orchestration, teams often use Apache Airflow, Kubeflow, or cloud-native solutions like AWS Step Functions. Data and model versioning are commonly managed with DVC (Data Version Control) and Git. For experiment tracking and model management, MLflow and Weights & Biases are industry standards. This combination of tools provides a comprehensive framework for automating and managing the entire ML lifecycle effectively.

How do you monitor an ML model in production?

Monitoring a production model involves two distinct layers. First, track operational metrics like latency, throughput, and error rates using tools like Prometheus to ensure service health. Second, and more critically, monitor for model performance degradation. This includes tracking data drift (changes in input data distribution) and concept drift (changes in the relationship between inputs and outputs). This continuous validation is essential for maintaining model accuracy and business value over time.

What is CI/CD for machine learning?

CI/CD for machine learning extends traditional DevOps practices to accommodate models and data. Continuous Integration (CI) expands beyond code testing to include automated data validation, feature logic tests, and model training checks. Continuous Delivery (CD) automates the deployment of an entire ML pipeline, not just an application. This creates a seamless, trigger-based system to retrain and deploy new models when code or data changes, ensuring models remain relevant and performant.

Is Kubernetes necessary for MLOps?

Kubernetes is not strictly necessary, but it is a powerful enabler for scalable and portable MLOps. It provides a standardized, resilient environment for containerized ML workloads, simplifying resource management and deployment across different cloud or on-premise infrastructures. For smaller-scale projects or teams preferring managed solutions, platforms like AWS SageMaker, Google AI Platform, or Azure Machine Learning can provide sufficient orchestration without the overhead of managing a Kubernetes cluster directly.

How do you version control machine learning models and data?

Version control for ML is critical for reproducibility. Code is versioned using Git. For data, which is often too large for Git, tools like DVC (Data Version Control) or Git LFS are used; they track pointers to data stored elsewhere. Models are versioned in a model registry, such as MLflow or a cloud provider’s native solution. The registry stores model artifacts, parameters, and performance metrics, creating an auditable history of every model produced.

.svg)